Mirjam Bamberger, Chief Strategic Development Officer, AXA in Europe & Latin America

September 20, 2022

AI - With Greater Power Comes Greater Responsibility

It came as a far-reaching surprise when, over a decade ago, trained models in Artificial Intelligence (AI) turned out not to be as impartial as we had hoped. Instead, AI applications led to institutionalizing and perpetuating existing bias. The risk of hard-coding discrimination into data-led decisions was uncovered.

4 minutes

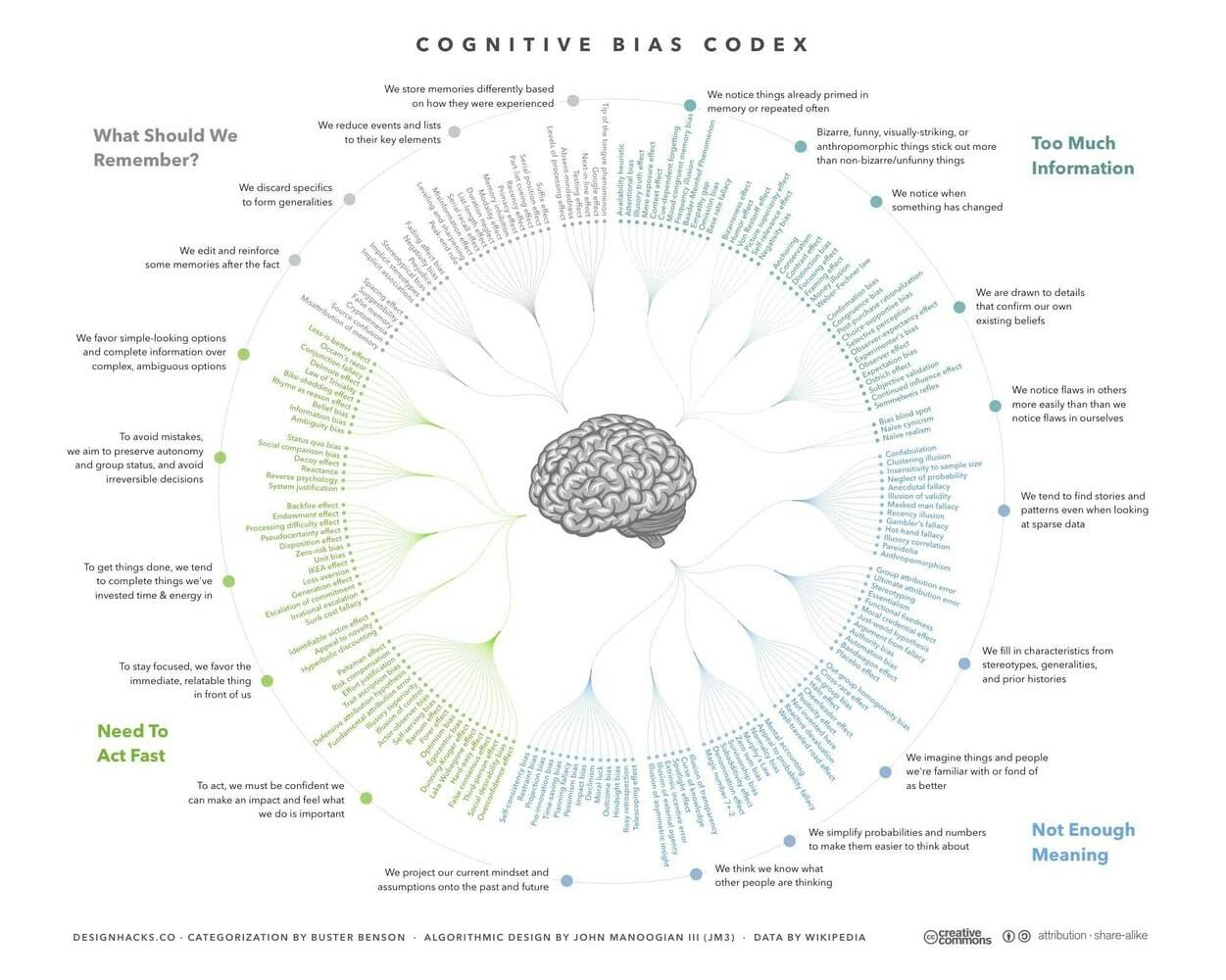

Since then, significant progress has been made to eliminate bias from Artificial Intelligence. Multiple measures against bias in models have been proposed. Algorithms were adapted with built-in safeguards to avoid discrimination by design. Yet, the question of what constitutes a bias-free model remains. It turns out to be a rather difficult question to answer, to say the least.

The only pacifying thought in this arduous task is that data-supported decisions are less bias-prone than human decisions, considering the cognitive biases that can blur human judgement. You will be aware of the remarkable spectrum of over 175 cognitive biases that carry us through life.

AI transforms how we deliver insurance

All realistic models make mistakes. It is inevitable that, no matter how prudently a model is trained, every once in a while it will lead to wrong decisions. For example, it may deny credit to a person who deserves a loan.

Despite such limitations, AI has been shaping the insurance industry over the last few years. We have seen many applications emerging, from detecting skin cancer to insuring self-driving cars. AI can vastly improve operational efficiency, and can change the way insurers work. To give you a few examples:

- Image recognition and natural language processing categorize unstructured data from e-mails effectively and help insurance claims teams decrease the time to settle a customer claim from 5 to 2 days. In specific situations AXA even offers instant payment if desired by our customers.

- Other AI techniques, such as machine learning, resemble traditional statistical modelling and make it possible to offer usage-based insurance, such as pay-as-you-drive.

- AI can secure faster access to healthcare and achieve better health outcomes. Insurance customers can check health symptoms early and get personalized healthcare recommendations, anytime and anywhere. In fact, AI is nowadays recognized as extremely effective in early skin cancer detection, to mention one application that drives prevention at an early stage.

With the power of AI comes greater responsibility

With the power of AI comes greater responsibility. As a consequence, AXA created some time ago a research team exclusively dedicated to fairness and safety in AI. The team produces research on these topics and also engages substantially in partnerships with research centres on AI fairness. In addition, AXA recently launched a Responsible AI Circle and published a practical handbook on the right kind of fairness in AI.

If you take a look at the study, you will soon realize that fairness in AI is one of the most complex topics to tackle. It offers concrete guidance on how to eliminate discrimination by design and avoid hard-coding bias into predictive models. Fairness measures concentrate on reducing disparity between, for example, genders or ethnicities.

- How to balance errors between groups, however, is not clear-cut. It depends on the specific situation, the assumptions about the data, the ground truth, and policies such as affirmative action.

- And to make things worse, fairness criteria that appear mandatory from an ethical perspective are mutually inconsistent. The abundance of methods and conflicting results is frustrating for practitioners.

- The discouraging reality is that any method, no matter how carefully applied, will break at least one fairness criterion. It will automatically be subject to criticism.
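To make the conflict between criteria tangible, here is a minimal sketch on invented toy data. It is an illustration only and is not taken from the AXA handbook: the group labels, the `Record` type and the helper functions are hypothetical. It compares an independence-style measure (equal approval rates per group) with a separation-style measure (equal true-positive rates per group) for a model that never makes a mistake, applied to two groups with different shares of deserving applicants.

```python
from dataclasses import dataclass


@dataclass
class Record:
    group: str      # protected attribute; "A" and "B" are hypothetical labels
    actual: int     # ground truth: 1 = deserves the loan, 0 = does not
    predicted: int  # model decision: 1 = approve, 0 = deny


def selection_rate(records, group):
    """Share of a group that the model approves (independence view)."""
    rows = [r for r in records if r.group == group]
    return sum(r.predicted for r in rows) / len(rows)


def true_positive_rate(records, group):
    """Share of deserving applicants in a group that the model approves (separation view)."""
    rows = [r for r in records if r.group == group and r.actual == 1]
    return sum(r.predicted for r in rows) / len(rows)


# Toy data: the model is always right, but the groups have different base rates.
records = (
    [Record("A", 1, 1)] * 6 + [Record("A", 0, 0)] * 4    # 60% of group A deserve a loan
    + [Record("B", 1, 1)] * 3 + [Record("B", 0, 0)] * 7  # 30% of group B deserve a loan
)

parity_gap = selection_rate(records, "A") - selection_rate(records, "B")
tpr_gap = true_positive_rate(records, "A") - true_positive_rate(records, "B")

print(f"selection-rate gap:     {parity_gap:.2f}")
print(f"true-positive-rate gap: {tpr_gap:.2f}")
```

Under these assumptions the error-based measure shows no gap between the groups, while the approval rates differ by 30 percentage points. Even an error-free model therefore breaks one of the two criteria as soon as the base rates differ.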

As a minimum, you may be guided by a simple point of reference: when the ground truth cannot be produced in a trustworthy way, never select fairness definitions that are measured against it. Select fairness definitions which rely on independence instead. A nearly philosophical statement.
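For readers who prefer a formula, independence is commonly formalised as below. The notation is an assumption of this sketch and not taken from the handbook: $\hat{Y}$ is the model's decision and $A$ the protected attribute.

```latex
\hat{Y} \perp A
\quad\Longleftrightarrow\quad
P(\hat{Y} = 1 \mid A = a) = P(\hat{Y} = 1 \mid A = b)
\quad \text{for all groups } a, b
```

The ground truth does not appear anywhere in this condition, which is why it remains usable when the recorded outcomes themselves cannot be trusted.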

Unconditional fairness does not exist

Emerging from the collaborations with the AXA research team, a Fairness Compass was developed. It addresses the information overload that prevents practitioners from selecting the fairness measure that is most suitable.

With the Compass, the selection becomes a transparent process based on a set of concrete questions, derived from a systematic decision tree. It becomes a straightforward and transparent task to provide a possible chain of arguments in a choice architecture that can be expanded and adapted. However, this does not save you from running many experiments on sample scenarios to test your outcomes.

Research in fair machine learning is constantly advancing. New types of fairness definitions will emerge along with real world applications. The Compass is only a first step and a contribution to a general debate on fairness that will advance and mature over time.

As the insurance industry is built on trust, it has a critical role to play in driving AI towards the right kind of fairness, following an overarching principle of treating customers in a fair, transparent, and safe way.

Mirjam Bamberger is a member of the Management Committee of AXA's European Markets & Latin America. Until January 2022, she was CEO of AXA Luxembourg and CEO of AXA Wealth Europe. Prior to this she served in various roles as a board member of AXA Switzerland, having completed an international trajectory in High Tech and Financial Services across the US, Asia and Europe.

Towards the Right Kind of Fairness in AI

Read the guide

The content of this article reflects the opinions of the author concerned and not necessarily those of the AXA Group.